Michal Štefánik

Welcome to my website! I am a Specially Appointed Researcher at the R&D Centre for Large Language Models at the National Institute of Informatics (NII) in Japan, in the team of Professor Pontus Stenetorp (NII/University College London). Previously, I was a Research Associate at the University of Helsinki and NLP Research Lead at Gauss Algorithmic.

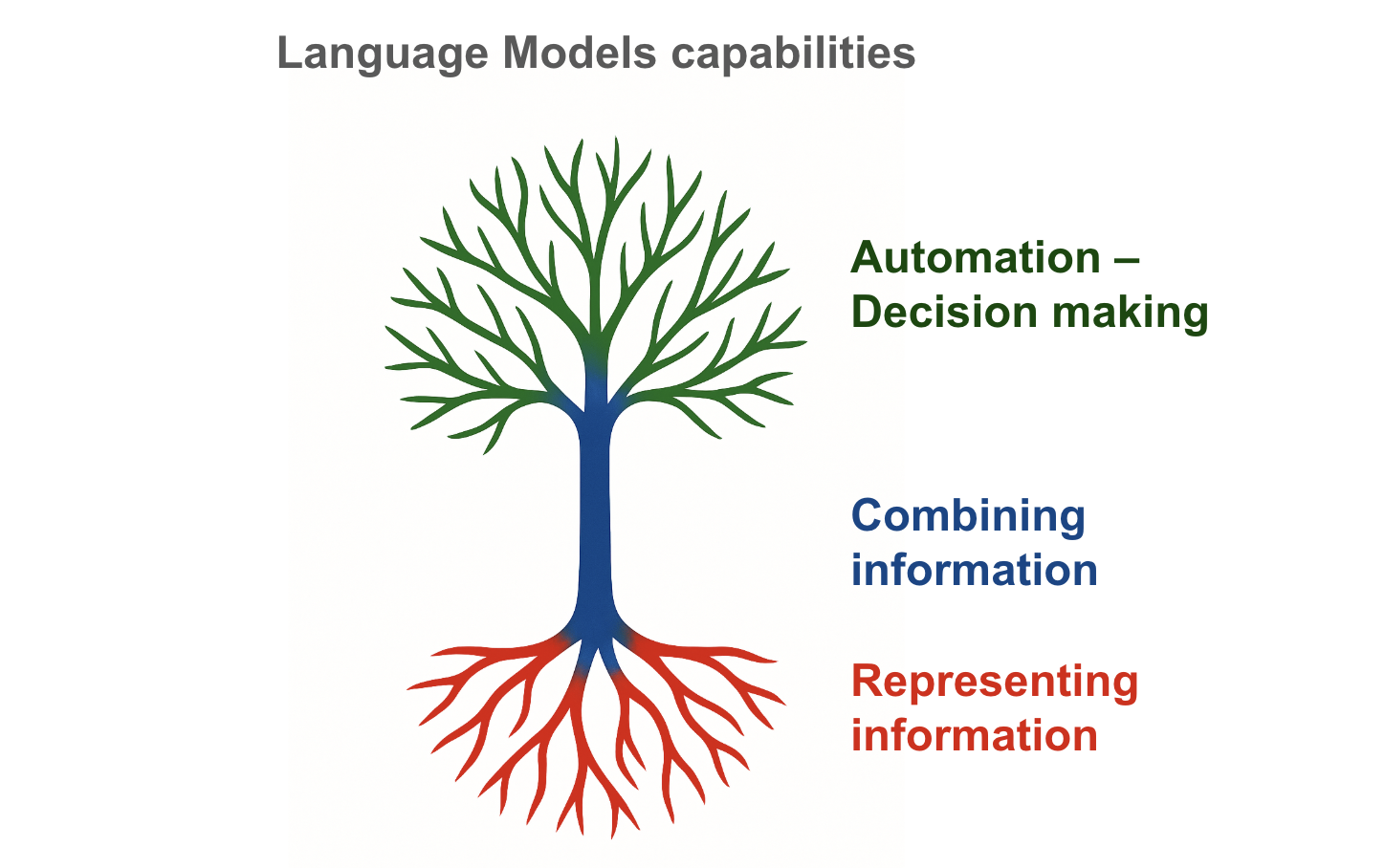

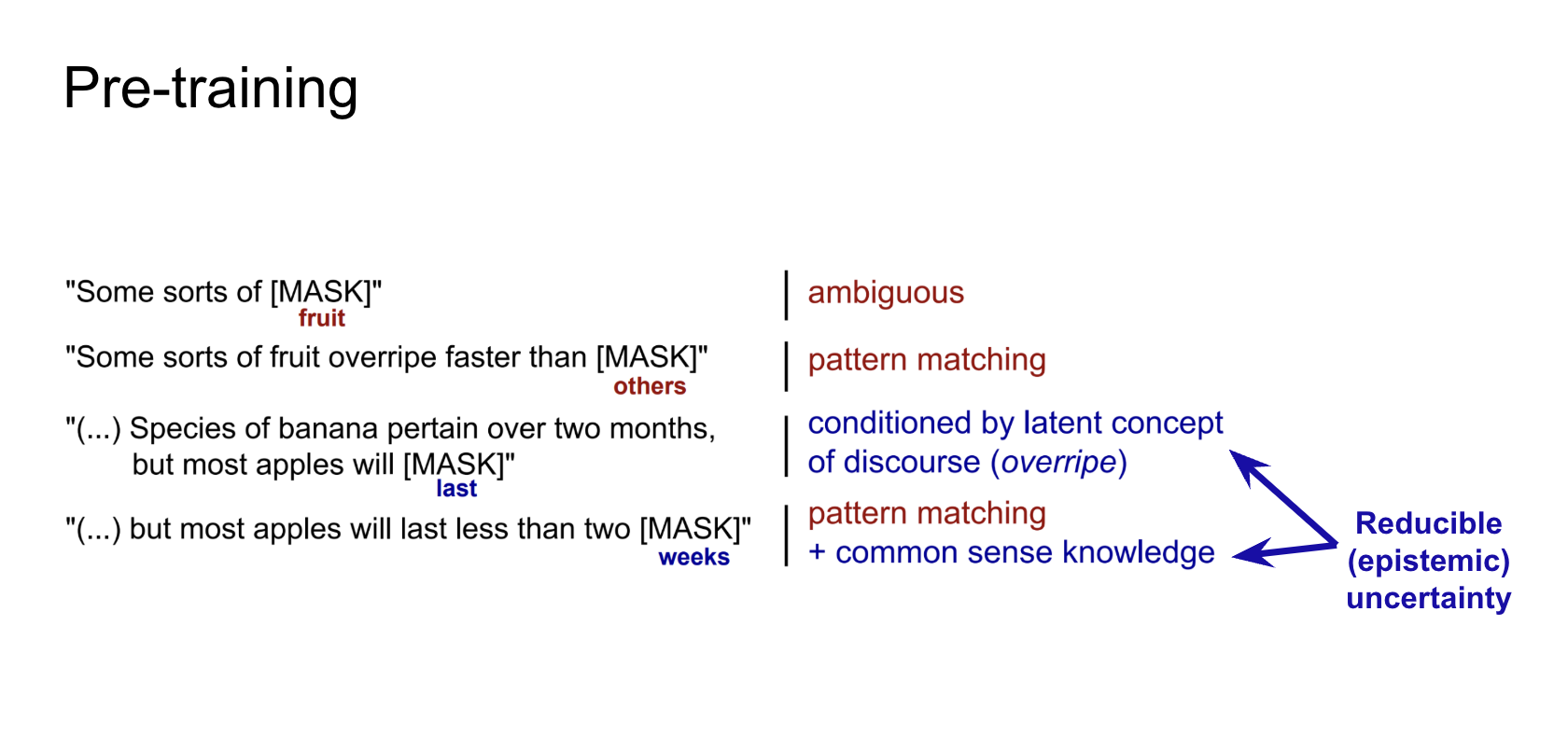

My research mission is to understand intelligent behaviour in language models. This encompasses unravelling the covariates of its emergence, e.g., in the properties of the models' training data or training objectives, but also the caveats and limitations of our evaluations. One entry point to these questions is mathematical reasoning, where my work studies how language models acquire, represent, and use abstract numeric structure. My previous work, presented at highly selective *ACL conferences, also delivers better models for low-resource applications, reasoning models able to adapt rapidly, and instructional models that are robust to prediction shortcuts. My research has been recognised by several awards (see below), most recently Summa Cum Laude and the Prorector's Award for my dissertation thesis.

I am the proud founder and leader of TransformersClub™, a platform that supports students in pursuing their own research ideas, with our members winning international prizes and presenting at top-tier NLP/AI conferences.

Over the last six years, I have also led the delivery of many industrial applications of language technologies at Gauss Algorithmic, with successful customer stories in language generation and entity recognition, all the way from research ideas to scalable deployments that today serve NLP technologies to thousands of users.

Do not hesitate to connect or reach out if our research interests align!